The New Data Engineer Interview: What Companies Are Testing in 2026

Executive summary (answer-first):

- In 2026, the “new” data engineer interview is less about tool name‑dropping and more about proving implementation: building reliable pipelines, writing production-grade SQL, and explaining trade-offs.

- Companies are tightening validation because candidate fraud and AI-assisted responses dilute signal, pushing more live, job-simulated assessments and deeper probing.

- Expect testing across SQL + modeling, pipeline/system design, reliability/operations, and cloud/platform + governance, with AI policy awareness increasingly relevant.

- US salary benchmarks are high, which suggests strong competition for roles; figures vary by source and methodology, so always specify base vs total comp.

Assumptions (explicit):

- Target audience: beginners and career switchers.

- Geography: global; when salary data is used, it is U.S.-based unless stated otherwise.

- If a company-specific detail is missing: Unspecified. If compensation detail is unclear: Depends on location, company, and skills.

The new data engineer interview in 2026 is a tighter, more verification-heavy process that emphasizes applied delivery—because AI has changed both productivity and the reliability of traditional screening signals.

You’ll learn what companies are testing now (and why), the most common assessment formats, a skills matrix you can prep against, and a practical strategy to pass interviews without inventing metrics or relying on vague “familiar with X” claims.

Quick summary: The 2026 data engineer interview tests applied skills: SQL, pipeline building, system design trade-offs, reliability/operations, and cloud/data-platform fundamentals. Motion notes hiring is more specialized and validation is harder due to AI and candidate fraud, so expect more probing and practical exercises.

Key takeaway: Motion says candidates must show implementation, not familiarity, and notes rising candidate fraud. Combine that with HackerRank reporting broad AI use and plagiarism concerns, and the interview shifts toward live validation: explain your work, debug, and demonstrate real pipeline thinking.

Quick promise: You’ll get a 2026-ready interview blueprint: format comparisons, a skill-by-assessment matrix, a “what good looks like” checklist, and salary-source context for 2026 data engineering pay. Use it to build a prep plan that produces verifiable proof, not guesses.

What changed in the data engineer interview in 2026

In 2026, companies are testing trustworthy, applied implementation more than memorized trivia, because AI adoption and fraud have weakened resume keyword and take-home signals.

The interview is “new” because validation and specialization matter more, and many steps are designed to confirm you actually built what you claim.

Three forces reshaping interviews (2026):

- AI reshapes productivity and evaluation. HackerRank reports near-universal AI assistant usage among developers in its 2025 report, and its 2024 report release highlights strong AI usage and recruiter concerns about plagiarism.

- Fraud and bots flood top-of-funnel. Motion explicitly calls out a surge in candidate fraud and notes scammers/bots increasing risk and slowing validation.

- Specialization wins. Motion states entry-level/generalist demand slowed, while specialized roles saw stronger growth; employers want evidence of applied expertise and AI fluency.

What “implementation, not familiarity” means in practice (how you’re tested):

- You’re asked to walk through decisions: data model, partitioning, incremental logic, failure modes.

- You’re expected to show work products: deployed projects, portfolios, or certifications as proof.

- Assessments trend toward job-simulated tasks. HackerRank’s 2025 report argues hiring should test the actual job and notes developers want fewer algorithm-heavy tests and more real-world work samples.

Assessment format comparison (what’s common and what it measures):

| Interview step | What it tests | “New in 2026” angle | What good looks like |
|---|---|---|---|
| Recruiter screen | Role fit, communication, constraints | More verification questions due to fraud (project provenance, specifics) | Clear story + specific artifacts |
| SQL / data task | Transformations, correctness, performance intuition | More emphasis on job-like SQL tasks vs trivia | Correct + readable + explains trade-offs |
| Coding / hands-on task | Pipeline logic, Spark/Kafka basics, working code | Assessment platforms (e.g., HackerRank) recommend hands-on tasks | Small, working solution with tests or checks |
| Data system design | End-to-end pipeline architecture | More focus on reliability, governance, cost | Requirements → design → failure modes |
| Behavioral / incident | Ownership, debugging, stakeholder management | “Explain your thinking” to validate authenticity | Postmortem-style clarity + trade-offs |

(HackerRank explicitly recommends hands-on tasks and lists core DE skills typically assessed, including SQL, Spark, Kafka, Hadoop, and language basics.)

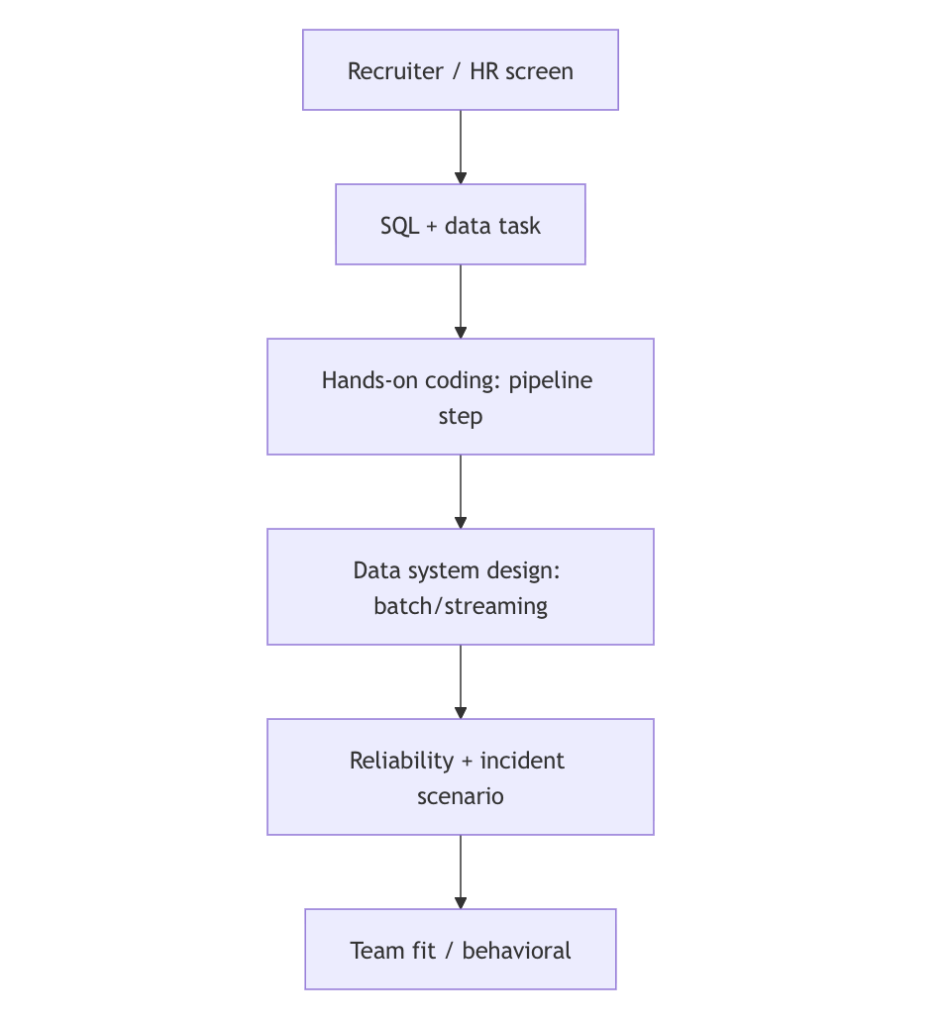

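Typical 2026 interview loop (high-level workflow):

Recruiter screen → SQL/data task → coding/hands-on task → data system design → behavioral/incident round. The order and number of rounds vary by company.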

Why competition is high (salary context, US-only sources):

| Source | What it reports for US data engineers (2026) | Notes |
|---|---|---|
| PayScale | Avg base $99,876; median $100k; 10th–90th percentile base $71k–$142k (updated Feb 25, 2026) | Self-reported salary profiles |
| Glassdoor | Avg $132,300; typical range $103,634–$170,643; based on 32,427 salaries (as of March 2026) | Aggregated submissions plus modeling |
| Built In | Avg base $125,983; total comp $150,234 | Separates base from additional cash |
| Levels.fyi | Median total comp $155,000 | Total comp, not base; attribute to Levels.fyi when citing |
| Motion (salary guide page) | Mid/senior starting salary ranges by city and role | Ranges vary by location |

If you’re interviewing outside the US: Depends on location, company, and skills.

What companies test first in 2026

Companies most often test SQL and practical data transformation first, because SQL remains universally useful for pipelines, warehouses, and debugging.

This early stage is designed to quickly separate “can build” from “can talk.”

Signals companies seek in SQL rounds (what “good” looks like):

- Correctness under realistic constraints (duplicates, late records, nulls).

- Readability: CTEs, clear naming, predictable grain.

- Basic performance intuition (filter early, avoid accidental Cartesian joins).

Evidence of what’s commonly assessed (from major assessment platforms and job-market sources):

- HackerRank lists SQL (Intermediate) as a core assessed skill for multiple DE profiles (e.g., PySpark/JavaSpark/ScalaSpark).

- PayScale’s DE page lists common skills such as SQL, ETL, AWS, Spark, Azure, Hadoop (skill tags on the role page).

Practical SQL question patterns (examples; adapt to your target role):

- “Compute rolling 7-day active users, deduplicate events, and explain late-arriving data handling.”

- “Design a fact table grain, then write the query that produces a KPI with filters and joins.”

- “Find anomalies: missing foreign keys, out-of-range measures, or freshness failures.”
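The first prompt above can be sketched with Python’s standard-library `sqlite3` module as a stand-in warehouse. The `events` table, its columns, and the sample rows are hypothetical. Deduplication keeps one row per exact duplicate delivery, and the rolling window is written as a date-range self-join because SQLite does not support `COUNT(DISTINCT ...)` as a window function.

```python
import sqlite3

# Hypothetical raw events: (event_id, user_id, event_date). Event 1 is delivered twice.
EVENTS = [
    (1, "u1", "2026-03-01"),
    (1, "u1", "2026-03-01"),  # exact duplicate delivery
    (2, "u2", "2026-03-03"),
    (3, "u1", "2026-03-05"),
    (4, "u3", "2026-03-09"),
]

QUERY = """
WITH dedup AS (
    -- one row per event: drop exact duplicate deliveries
    SELECT DISTINCT event_id, user_id, event_date FROM events
),
days AS (
    -- only days that have events; a calendar table would fill the gaps
    SELECT DISTINCT event_date AS day FROM dedup
)
SELECT d.day, COUNT(DISTINCT e.user_id) AS active_users_7d
FROM days AS d
JOIN dedup AS e
  ON e.event_date BETWEEN date(d.day, '-6 days') AND d.day
GROUP BY d.day
ORDER BY d.day
"""

def rolling_7d_active_users(rows):
    """Return (day, distinct users active in the 7 days ending on that day)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (event_id INTEGER, user_id TEXT, event_date TEXT)")
    conn.executemany("INSERT INTO events VALUES (?, ?, ?)", rows)
    result = conn.execute(QUERY).fetchall()
    conn.close()
    return result

for day, users in rolling_7d_active_users(EVENTS):
    print(day, users)
```

For late-arriving data, one option is to recompute the trailing window on every run so late events land in the correct days; how far back to look is a trade-off worth stating aloud.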

Data modeling is increasingly tested explicitly rather than assumed.

- Glassdoor’s DE interview page frames the job as turning raw data into usable data by building pipelines and systems—modeling is how those systems stay coherent.

Mini skills matrix (SQL + modeling):

| Skill | How it shows up | What interviewers probe |
|---|---|---|
| Join correctness | Multi-table metrics | Grain, keys, duplicates |
| Windowing / time | Trends, sessions, retention | Ordering, partitioning, late data |
| Modeling | Facts/dimensions, entities | Grain, SCD approach, constraints |
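Join correctness and grain are easiest to explain with a fan-out example. This sketch uses hypothetical `orders` and `order_items` tables in an in-memory SQLite database: joining a per-order measure to a per-item table silently repeats the measure, while aggregating items to the order grain first keeps the total correct.

```python
import sqlite3

def revenue_two_ways():
    """Return (fanned-out total, correct total) for the same hypothetical data."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE orders (order_id INTEGER PRIMARY KEY, order_total REAL);
        CREATE TABLE order_items (order_id INTEGER, sku TEXT);
        INSERT INTO orders VALUES (1, 100.0), (2, 50.0);
        -- order 1 has two line items, so an item-grain join repeats its total
        INSERT INTO order_items VALUES (1, 'A'), (1, 'B'), (2, 'C');
    """)
    # Wrong: the join changes the grain to one row per item, so order 1 counts twice.
    fanned_out = conn.execute("""
        SELECT SUM(o.order_total)
        FROM orders AS o JOIN order_items AS i ON i.order_id = o.order_id
    """).fetchone()[0]
    # Right: aggregate items to the order grain first, then join one row per order.
    correct = conn.execute("""
        WITH items_per_order AS (
            SELECT order_id, COUNT(*) AS n_items FROM order_items GROUP BY order_id
        )
        SELECT SUM(o.order_total)
        FROM orders AS o JOIN items_per_order AS i ON i.order_id = o.order_id
    """).fetchone()[0]
    conn.close()
    return fanned_out, correct

print(revenue_two_ways())  # (250.0, 150.0)
```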

What companies test in system design and pipeline architecture

Companies test end-to-end pipeline design because data engineering is fundamentally about turning raw inputs into reliable downstream outputs (tables, analytics, ML features).

In 2026, this round is often the main differentiator: you must explain trade-offs and failure handling, not just draw boxes.

What “system design” means in a data engineering loop (2026):

- Batch vs streaming choices and why.

- Storage layout (warehouse and lakehouse patterns are both common; the company-specific choice is unspecified).

- Data contracts and schema evolution strategy.

- Backfills, idempotency, and operational ownership.
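One common way to make reruns and backfills safe is to overwrite a whole partition atomically instead of appending to it. A minimal sketch, assuming a hypothetical `daily_sales` table partitioned by a `ds` date column, with SQLite standing in for a warehouse: running the same load twice leaves exactly one copy of the day’s data.

```python
import sqlite3

def load_partition(conn: sqlite3.Connection, ds: str, rows) -> None:
    """Replace one daily partition: delete, then insert, inside a single transaction."""
    with conn:  # commits if the block succeeds, rolls back if it raises
        conn.execute("DELETE FROM daily_sales WHERE ds = ?", (ds,))
        conn.executemany(
            "INSERT INTO daily_sales (ds, store_id, amount) VALUES (?, ?, ?)",
            [(ds, store_id, amount) for store_id, amount in rows],
        )

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE daily_sales (ds TEXT, store_id TEXT, amount REAL)")

batch = [("s1", 10.0), ("s2", 5.0)]
load_partition(conn, "2026-03-01", batch)
load_partition(conn, "2026-03-01", batch)  # rerun/backfill of the same day: no duplicates
print(conn.execute("SELECT COUNT(*), SUM(amount) FROM daily_sales").fetchone())  # (2, 15.0)
```

Many warehouses and table formats offer native equivalents (partition overwrite or `MERGE`); which one to use is company-specific.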

Evidence-based “what gets tested” anchors:

- HackerRank explicitly describes hands-on tasks that assess Spark transformations and Spark job writing, plus Kafka architecture concepts such as partitions/brokers/producers/consumers.

- Glassdoor’s DE interview page highlights building pipelines and data systems as core to the role.
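The Kafka partition concept usually probed is that records with the same key land in the same partition, which preserves per-key ordering; there is no ordering guarantee across partitions. This is a simplified simulation, not Kafka code: it uses CRC32 as a stand-in hash (Kafka’s default Java producer partitioner uses murmur2 for keyed records), and the topic, keys, and partition count are hypothetical.

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 3  # hypothetical topic size

def partition_for(key: str, num_partitions: int = NUM_PARTITIONS) -> int:
    # Stand-in hash: CRC32 of the key bytes, modulo the partition count.
    return zlib.crc32(key.encode("utf-8")) % num_partitions

def produce(records):
    """Append (key, value) records to per-partition logs, like a producer writing to a topic."""
    partitions = defaultdict(list)
    for key, value in records:
        partitions[partition_for(key)].append((key, value))
    return partitions

records = [("user-1", "login"), ("user-2", "view"), ("user-1", "click"), ("user-1", "logout")]
logs = produce(records)
for partition, entries in sorted(logs.items()):
    print(partition, entries)
```

A follow-up worth raising: with hash-modulo placement, changing the partition count remaps keys, which can break per-key ordering during the transition.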

A “new 2026” expectation: show your reasoning live.

- Motion says hiring managers face more fraud and need proof; candidates stand out with deployed projects and clear implementation evidence.

- HackerRank’s 2025 report argues assessments should reflect real work, not purely algorithm-heavy tests.

“Golden” trade-offs companies probe (say them explicitly):

- Correctness vs latency vs cost vs operational complexity.

- Exactly-once vs at-least-once semantics (company expectations vary: unspecified).

- Schema enforcement vs flexibility.
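A common way to reconcile delivery semantics is at-least-once delivery plus an idempotent write: duplicates may arrive, but applying the same event twice has no extra effect. A minimal sketch, assuming every event carries a unique `event_id` (a hypothetical field) and using an in-memory sink for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class IdempotentSink:
    """Hypothetical sink that applies each event at most once, keyed by event_id."""
    seen_ids: set = field(default_factory=set)
    balance: int = 0

    def apply(self, event_id: str, amount: int) -> None:
        if event_id in self.seen_ids:  # redelivered duplicate: ignore it
            return
        self.seen_ids.add(event_id)
        self.balance += amount

# At-least-once delivery: e2 arrives twice, as after a consumer retry.
deliveries = [("e1", 10), ("e2", 5), ("e2", 5), ("e3", -3)]
sink = IdempotentSink()
for event_id, amount in deliveries:
    sink.apply(event_id, amount)
print(sink.balance)  # 12
```

In a real system, the dedup record and the state change must be committed atomically (for example, in the same transaction); otherwise a crash between the two steps reintroduces duplicates or losses.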

Practical design prompt examples (common, not universal):

- “Design a pipeline for clickstream events to power a dashboard and anomaly alerts.”

- “Build a CDC feed from OLTP to analytics tables with updates and deletes.”

- “Design a lakehouse table layout for incremental processing and queries.”
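The CDC prompt usually hinges on ordering and deletes. A minimal sketch, assuming each change record carries a monotonically increasing log sequence number (`lsn`), an operation code, a primary key, and the full row image. These are hypothetical shapes; real CDC tools differ. Changes are applied in `lsn` order, deletes remove the row, and replays are ignored because each key remembers the last `lsn` applied.

```python
from typing import Dict, List, Tuple

# Hypothetical change record: (lsn, op, primary_key, row); op is "I", "U", or "D".
Change = Tuple[int, str, int, dict]

def apply_changes(target: Dict[int, dict], applied_lsn: Dict[int, int], changes: List[Change]) -> None:
    """Apply CDC changes in log order; skip changes not newer than what a key already has."""
    for lsn, op, pk, row in sorted(changes, key=lambda c: c[0]):
        if applied_lsn.get(pk, -1) >= lsn:
            continue  # stale or replayed change: already reflected for this key
        applied_lsn[pk] = lsn  # kept even after a delete, so a replayed insert cannot resurrect the row
        if op == "D":
            target.pop(pk, None)  # hard delete; a soft-delete flag is another option
        else:  # "I" and "U" both upsert the latest row image
            target[pk] = row

changes = [
    (1, "I", 100, {"name": "Ada", "plan": "free"}),
    (2, "I", 200, {"name": "Lin", "plan": "free"}),
    (3, "U", 100, {"name": "Ada", "plan": "pro"}),
    (4, "D", 200, {}),
]
table, lsns = {}, {}
apply_changes(table, lsns, changes)
apply_changes(table, lsns, changes)  # replaying the same batch changes nothing
print(table)  # {100: {'name': 'Ada', 'plan': 'pro'}}
```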

What companies test in reliability, quality, and operations

In 2026, companies test operational thinking—quality checks, idempotent reruns, monitoring, and incident response—because “trusted pipelines” are a core business risk area.

This is also where career switchers can outperform: reliability is learnable through disciplined projects and runbooks.

Why this is bigger now (verified signals):

- Motion explicitly says scammers/bots increase risk and make validation difficult; employers rely more on trusted pipelines and deliberate verification.

- BLS’s database roles emphasize secure, efficient, backed-up systems and permissions—operational responsibilities that overlap strongly with modern data engineering expectations.

What reliability is tested through (common formats):

- “An on-call scenario”: job failed, data is late, downstream dashboard broken.

- “A backfill scenario”: bug discovered, need to recompute historical partitions.

- “A data quality scenario”: duplicates, null spikes, referential integrity breaks.
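The three data quality scenarios map to simple SQL checks that return counts of failing rows. A minimal sketch on hypothetical `orders` and `customers` tables in SQLite; the thresholds here are zero-tolerance, and a real “null spike” check would compare the null rate to a historical baseline rather than a fixed count.

```python
import sqlite3

def run_quality_checks(conn: sqlite3.Connection) -> dict:
    """Return failing-row counts for three hypothetical checks."""
    checks = {
        # duplicates: more than one row per business key
        "duplicate_order_ids": """
            SELECT COUNT(*) FROM (
                SELECT order_id FROM orders GROUP BY order_id HAVING COUNT(*) > 1
            )""",
        # nulls: rows missing a required column
        "null_customer_ids": "SELECT COUNT(*) FROM orders WHERE customer_id IS NULL",
        # referential integrity: orders pointing at customers that do not exist
        "orphan_customer_ids": """
            SELECT COUNT(*) FROM orders AS o
            WHERE o.customer_id IS NOT NULL
              AND NOT EXISTS (SELECT 1 FROM customers AS c WHERE c.customer_id = o.customer_id)""",
    }
    return {name: conn.execute(sql).fetchone()[0] for name, sql in checks.items()}

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (customer_id INTEGER PRIMARY KEY);
    CREATE TABLE orders (order_id INTEGER, customer_id INTEGER);
    INSERT INTO customers VALUES (1), (2);
    INSERT INTO orders VALUES (10, 1), (10, 1), (11, NULL), (12, 99);
""")
results = run_quality_checks(conn)
failed = [name for name, count in results.items() if count > 0]
print(failed)
```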

Operational skill checklist (what interviewers want to hear):

- Detection: What metric/alert catches it first?

- Diagnosis: Where do you look (logs, lineage, query plan)?

- Containment: Stop the bleed (pause schedule, isolate bad partitions).

- Fix + prevention: Add test/constraint, improve schema checks, write runbook.
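Detection is often the easiest step to make concrete. A minimal freshness check, assuming the pipeline records a hypothetical `last_loaded_at` timestamp per table and a per-table SLA; in practice the status would feed an alerting system rather than be printed.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

def freshness_status(last_loaded_at: Optional[datetime], now: datetime, sla: timedelta) -> str:
    """Classify a table as 'missing', 'late', or 'fresh' against a freshness SLA."""
    if last_loaded_at is None:
        return "missing"  # never loaded: alert immediately
    return "late" if now - last_loaded_at > sla else "fresh"

now = datetime(2026, 3, 10, 9, 0, tzinfo=timezone.utc)
sla = timedelta(hours=6)  # hypothetical SLA: data no older than 6 hours
print(freshness_status(datetime(2026, 3, 10, 5, 0, tzinfo=timezone.utc), now, sla))  # fresh (4h old)
print(freshness_status(datetime(2026, 3, 9, 23, 0, tzinfo=timezone.utc), now, sla))  # late (10h old)
```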

Reliability and governance skills matrix:

| Competency | Example test | Evidence anchor |
|---|---|---|
| Security/access | “Who should see PII? How do you enforce roles?” | BLS notes DBAs plan security measures and update permissions |
| Backup/restore mindset | “How do you prevent data loss?” | BLS includes backup/restore as core duty |
| Monitoring | “How do you detect late/failed jobs?” | Motion emphasizes trusted pipelines in a fraud-heavy funnel |
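For the PII question, interviewers usually expect enforcement in the platform (grants, secure views, masking policies), but the logic is easy to sketch. A minimal Python illustration with a hypothetical role policy and a hypothetical secret key: roles without PII access see a keyed HMAC token instead of the raw email, which keeps the column joinable without exposing it.

```python
import hashlib
import hmac

# Hypothetical policy: which columns are PII and which roles may see them raw.
PII_COLUMNS = {"email"}
ROLES_WITH_PII_ACCESS = {"support_admin"}
SECRET_KEY = b"demo-key-not-secret"  # hypothetical; a real key lives in a secrets manager

def pseudonymize(value: str) -> str:
    # Keyed HMAC: same input -> same token, but not reversible without the key.
    return hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]

def read_row(row: dict, role: str) -> dict:
    """Return the row with PII columns pseudonymized unless the role may see them."""
    if role in ROLES_WITH_PII_ACCESS:
        return dict(row)
    return {k: (pseudonymize(v) if k in PII_COLUMNS else v) for k, v in row.items()}

row = {"user_id": 7, "email": "ada@example.com", "plan": "pro"}
analyst_view = read_row(row, role="analyst")
admin_view = read_row(row, role="support_admin")
print(analyst_view)
```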

What not to do (high rejection risk in 2026):

- Vague answers like “I’d just rerun it” without idempotency/backfill strategy.

- Claiming metrics you didn’t measure (do not invent).

- Ignoring security/privacy questions when the role handles sensitive data.

What companies test about cloud, platform, and AI fluency

Companies increasingly test cloud/platform fundamentals and AI-aware workflows, because specialization and AI fluency are tied to career mobility and hiring success, and because practical skills must now be validated under new constraints such as AI assistance and candidate fraud.

This doesn’t mean everyone needs the same cloud stack; it means you must explain your choices and show deployable thinking.

Cloud/platform topics that surface early (evidence-backed indicators):

- Glassdoor’s DE interview page includes a question about experience with Hadoop and cloud data management environments, implying cloud familiarity is a common interview theme.

- PayScale lists cloud and big-data skills (e.g., AWS, Azure, Spark, Hadoop) as common skills associated with the role.

AI fluency: what companies can reasonably test (without assuming policy):

- Can you explain when you would use AI assistance vs when you must validate manually?

- Can you critique AI-generated SQL/pipeline logic and find edge cases?

- Can you work under an “open book” or “no AI” policy if required (company policy: unspecified)?
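One concrete way to show you can critique AI-generated SQL is to test it against an edge case. This sketch uses SQLite and hypothetical tables; the “draft” query stands in for AI-generated output and is not from any particular tool. With a single NULL in the subquery, `NOT IN` filters out every row, while `NOT EXISTS` returns the expected customers.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (customer_id INTEGER);
    CREATE TABLE churned (customer_id INTEGER);
    INSERT INTO customers VALUES (1), (2), (3);
    INSERT INTO churned VALUES (2), (NULL);  -- one bad row with a NULL key
""")

# Hypothetical AI-drafted anti-join: once the subquery contains a NULL,
# "x NOT IN (2, NULL)" is never TRUE, so no rows come back.
draft = "SELECT customer_id FROM customers WHERE customer_id NOT IN (SELECT customer_id FROM churned)"

# Reviewed version: NOT EXISTS is NULL-safe for this anti-join.
reviewed = """
    SELECT c.customer_id FROM customers AS c
    WHERE NOT EXISTS (SELECT 1 FROM churned AS x WHERE x.customer_id = c.customer_id)
    ORDER BY c.customer_id
"""

draft_rows = conn.execute(draft).fetchall()
reviewed_rows = conn.execute(reviewed).fetchall()
print(draft_rows, reviewed_rows)  # [] [(1,), (3,)]
```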

Why AI policy matters (verified context):

- HackerRank’s 2024 report release highlights that many developers use AI to code and many talent acquisition professionals worry about AI-related plagiarism.

- Motion highlights candidate fraud and verification difficulty.

- HackerRank’s 2025 report calls for assessment redesign toward job-relevant evaluation.

If the interviewer asks “Do you use AI?” (safe, high-signal answer pattern):

- “Yes, where allowed: I use it for drafting options and speeding up boilerplate.”

- “I validate outputs: tests, edge cases, query plans, and data checks.”

- “If AI is restricted, I follow policy and narrate my reasoning clearly.”

Preparation without invented timelines (sequence, not duration):

- Build one end-to-end project you can demo live.

- Practice SQL under time constraints and explain decisions aloud.

- Rehearse two system designs (batch analytics + streaming/CDC).

- Prepare one incident story using a postmortem structure (what happened, impact, fix, prevention).

FAQ

What are companies testing in data engineer interviews in 2026?

They’re testing applied implementation: SQL transformations, pipeline architecture, reliability/operations, and platform fundamentals. Motion emphasizes “implementation, not familiarity,” and highlights fraud/verification pressure, which pushes interviews toward practical demos and deeper probing. Expect job-simulated tasks and clear explanations of trade-offs.

How has AI changed the data engineer interview in 2026?

AI changes both productivity and hiring signal. HackerRank reports broad AI usage among developers and strong recruiter concern about AI-related plagiarism, while Motion highlights candidate fraud and verification difficulty. As a result, companies often prefer live reasoning, job-like tasks, and probing questions that validate understanding, not just output.

Do companies still give take-home projects in 2026?

Yes, but the trend is toward assessments that better reflect the job and include stronger validation. HackerRank recommends hands-on tasks for data engineering skills, and its 2025 report argues assessments should match real work rather than just what’s easiest to measure. Company policies vary: unspecified.

Is SQL still the most important interview skill in 2026?

Yes—SQL is still a universal screen because it’s the common language of transformations, debugging, and analytics outputs. HackerRank’s DE assessments include intermediate SQL, and PayScale lists SQL as a core skill associated with the role. You’ll also be evaluated on clarity and correctness, not only syntax.

What does “system design” mean for a data engineer interview?

It usually means designing an end-to-end pipeline: ingestion, storage, transformation, serving, monitoring, and backfills. Glassdoor frames the role around turning raw data into usable data via pipelines and systems. Strong answers include trade-offs (correctness/latency/cost) and failure handling.

How do I prepare for reliability and incident questions without production experience?

Focus on learnable artifacts: idempotent reruns, data quality checks, logs/metrics, and a runbook. BLS describes database work emphasizing security, backups, and efficient operation—use those operational concepts to structure your answers. Don’t invent incident metrics; describe process and prevention.

Will cloud skills be tested in every 2026 interview?

Not always, but cloud familiarity appears frequently. Glassdoor’s DE interview questions include cloud data management, and PayScale lists AWS/Azure among common skills associated with the role. If you haven’t used a particular cloud, you can still pass by explaining deployable design patterns and trade-offs. Depends on location, company, and skills.

How should I answer questions about AI use in interviews?

Answer policy-first: ask what’s allowed, then describe your workflow. HackerRank reports strong concern among talent acquisition professionals about AI plagiarism, and Motion highlights fraud pressure, so ethical clarity matters. Explain how you validate AI outputs (tests, edge cases, query plans) and how you work without AI if required.

How competitive are data engineer interviews in 2026?

They can be competitive due to specialization and compensation. Motion reports hiring slowed for entry-level/generalist roles and emphasizes specialization; salary benchmarks from PayScale, Glassdoor, Built In, and Levels.fyi show high pay but vary by methodology. Use multiple sources and specify base vs total comp.

What’s the safest portfolio to bring into a 2026 interview?

Bring one end-to-end pipeline repo that you can demo: diagram, reproducible run, SQL models, data quality checks, orchestration, and a short runbook. Motion says deployed projects and portfolios help candidates stand out, especially as employers prioritize proof and verification.

One-minute summary

- 2026 interviews prioritize implementation and verification due to AI and candidate fraud pressures.

- Expect screens on SQL + modeling, then pipeline/system design, then reliability/operations, plus cloud/platform fundamentals.

- Hiring signal shifts toward job-simulated tasks over algorithm-heavy trivia.

- Use salary sources carefully: base vs total comp varies across PayScale/Glassdoor/Built In/Levels.fyi/Motion.

- If unclear: Depends on location, company, and skills.
